Enhancing Driving Visibility via Semantic-Guided Knowledge Distillation Framework for Adverse Weather Removal

[Paper] [Code]

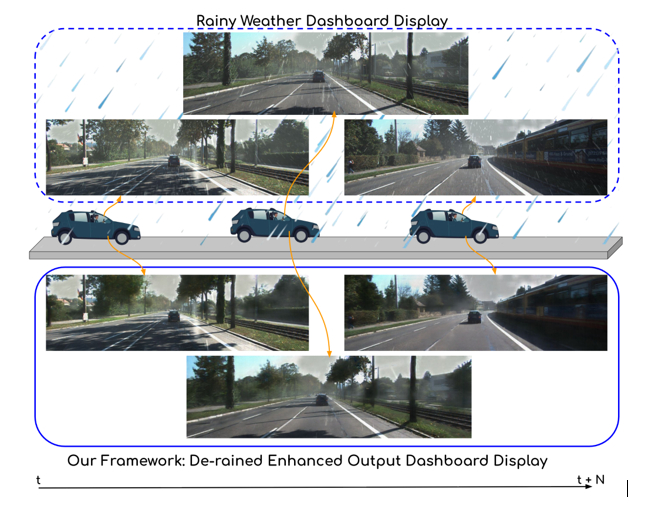

Figure 1: Illustration of our framework on an ADAS vehicle in a rainy driving scenario for adverse weather removal.

Abstract

Adverse weather such as rain, haze, and low light severely degrades visual perception in Advanced Driver Assistance Systems (ADAS) and autonomous driving, leading to degraded scene understanding and increased safety risks. We propose a unified, semantic-guided knowledge distillation restoration framework that addresses multi-weather removal while preserving semantics. Our method employs a semantic-guided dual-decoder architecture trained via two-stage multi-teacher knowledge distillation, transferring expertise from multiple high-capacity models into a lightweight student model. Segmentation-aware contrastive learning further aligns low-level restoration with high-level semantic structure, enabling robust detection of roads, vehicles, and pedestrians under challenging conditions. Trained on a mix of synthetic and real-world data with segmentation-guided feature refinement, our framework generalises effectively to real-world unseen environments. Extensive experiments on multiple benchmarks show competitive or superior performance to state-of-the-art methods, with real-time inference suitable for edge deployment. This makes our approach well-suited for safety-critical perception in autonomous and semi-autonomous systems operating in adverse outdoor environments.

Methodology

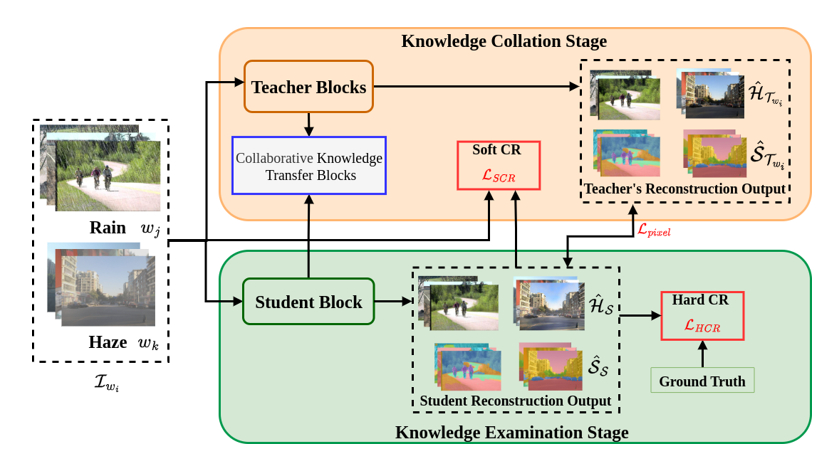

Our method builds a unified lightweight student model for adverse weather removal by distilling knowledge from multiple weather-specific teacher networks. The key idea is to combine image restoration with semantic guidance, so the model not only improves visibility but also preserves important scene structures such as roads, vehicles, and pedestrians.

Figure 2: Overview of our semantic-guided knowledge distillation framework. Multiple weather-specific teachers (rain, haze) are collaboratively distilled into a unified student in two stages: (1) Knowledge Collation, which softly aligns the student with teacher reconstructions and segmentation priors, and (2) Knowledge Examination, which enforces ground-truth consistency through hard constraints. Segmentation maps provide region-aware supervision, emphasizing critical structures such as roads and vehicles.

At inference time, only the lightweight student model is used. This makes the framework efficient, memory-friendly, and suitable for real-time deployment without requiring any teacher model during testing.
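The two stages named in Figure 2 can be illustrated with a minimal NumPy sketch. This is not the training code of the paper: the L1 form of the loss, the `boost` factor, and all function names (`region_weights`, `weighted_l1`, `collation_loss`, `examination_loss`) are assumptions chosen only to show how segmentation maps could weight a teacher-alignment loss (stage 1) and a ground-truth loss (stage 2).

```python
import numpy as np


def region_weights(seg_map, critical_ids, boost=2.0):
    """Per-pixel weights: `boost` on critical classes, 1.0 elsewhere.

    `critical_ids` and `boost` are hypothetical; the page only says that
    segmentation maps emphasize structures such as roads and vehicles.
    """
    weights = np.ones(seg_map.shape, dtype=np.float64)
    weights[np.isin(seg_map, critical_ids)] = boost
    return weights


def weighted_l1(pred, target, weights):
    """Weighted mean absolute error between two H x W x C images."""
    per_pixel = np.abs(pred - target).mean(axis=-1)  # average over channels
    return float((weights * per_pixel).sum() / weights.sum())


def collation_loss(student_out, teacher_out, weights):
    """Stage 1 (assumed form): soft alignment with a teacher reconstruction."""
    return weighted_l1(student_out, teacher_out, weights)


def examination_loss(student_out, ground_truth, weights):
    """Stage 2 (assumed form): hard constraint against the clean image."""
    return weighted_l1(student_out, ground_truth, weights)


# Toy usage: a 2 x 2 label map with hypothetical ids 1 = road, 2 = vehicle.
seg = np.array([[0, 1], [2, 0]])
w = region_weights(seg, critical_ids=[1, 2])
student = np.zeros((2, 2, 3))
teacher = np.full((2, 2, 3), 0.1)
stage1 = collation_loss(student, teacher, w)
```

With these weights, an error on a road or vehicle pixel contributes twice as much to the loss as the same error on a background pixel, which is one simple way to realise region-aware supervision.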

Quantitative Results

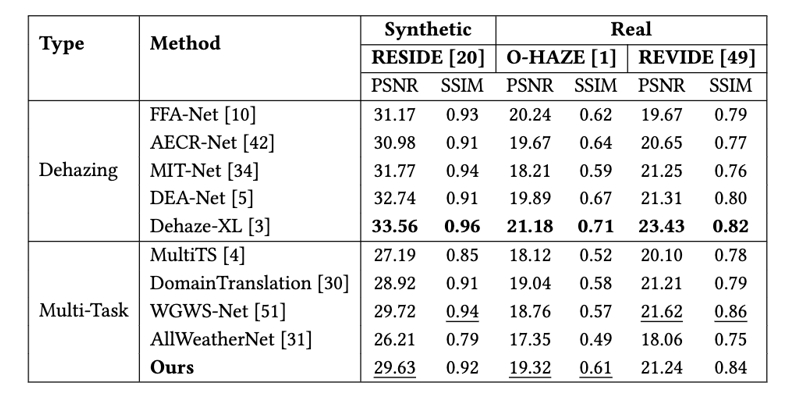

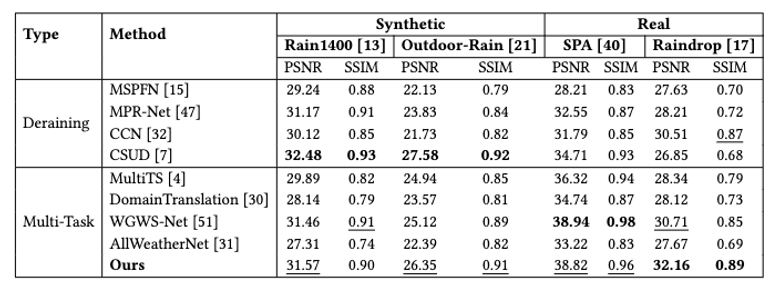

Our method achieves strong performance across both synthetic and real-world adverse weather datasets, showing consistent improvements in restoration quality while preserving semantic structure.

Table 1: Quantitative evaluation of image dehazing performance on synthetic and real datasets.


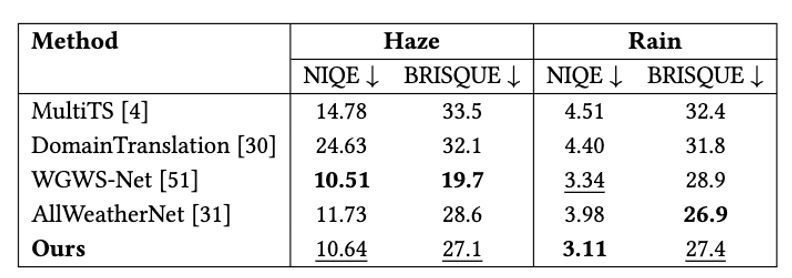

Table 3: Quantitative comparison on real-world derained and dehazed images from IDD-AW using NIQE and BRISQUE.

Qualitative Results

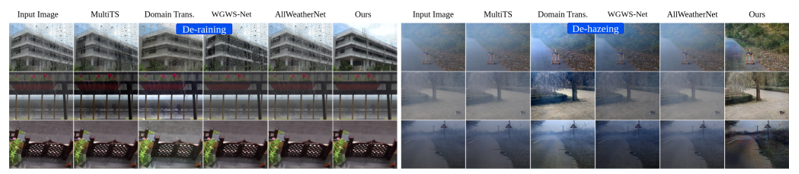

Figure 3: Visual comparison of the proposed and existing methods on real datasets (Raindrop, SPA, O-HAZE) for multi-weather restoration. The image can be zoomed in for improved visualization.

Figure 4: Visual comparison of the proposed and existing methods on synthetic datasets (Outdoor-Rain, RESIDE) for multi-weather restoration. The image can be zoomed in for improved visualization.

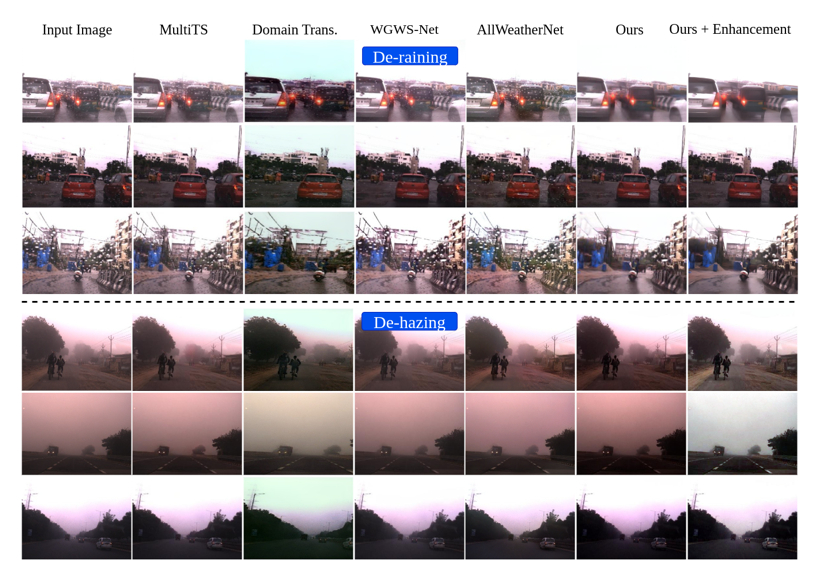

Figure 5: Qualitative comparison on real-world rain and haze images with low light from the IDD-AW dataset.

Citation

@inproceedings{Enhancing2025icvgip,

author = {Hanvitha Saraswathi Mukkamala and Shankar Gangisetty and Ananya Kulkarni and Veera Ganesh Yalla and C. V. Jawahar},

title = {Enhancing Driving Visibility via Semantic-Guided Knowledge Distillation Framework for Adverse Weather Removal},

booktitle = {},

series = {},

volume = {},

pages = {},

publisher = {},

year = {2025},

}

Acknowledgements

This work is supported by iHub-Data and Mobility at IIIT Hyderabad.