DriveSafe: A Framework for Risk Detection and Safety Suggestions in Driving Scenarios


[Paper] [Code] [Dataset]

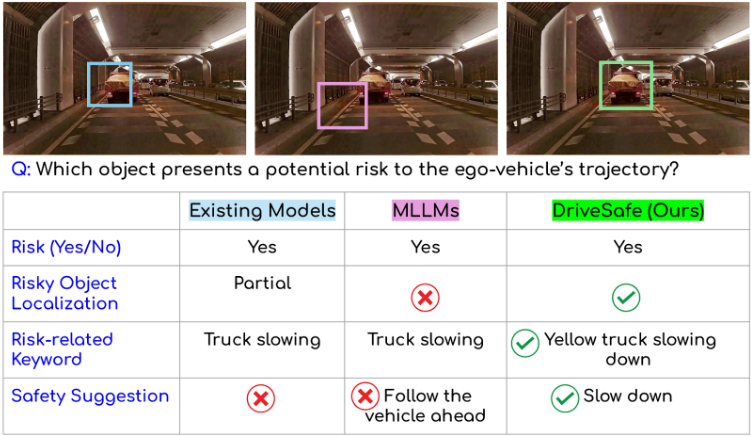

Fig: Previous works in driving scenarios primarily address risk perception but fall short of offering actionable safety guidance. Similarly, general-purpose MLLMs (Qwen2.5-VL, LLaVA-NeXT, VideoLLaMA3) are still unreliable in this regard. In contrast, our approach, DriveSafe, integrates risk assessment with clear, human-understandable safety suggestions.

Abstract

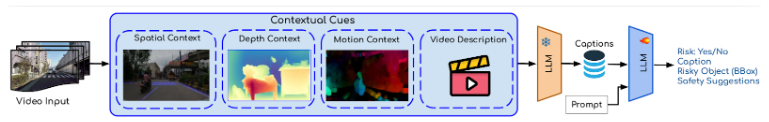

Comprehensive situational awareness is essential for autonomous vehicles operating in safety-critical environments, as it enables the identification and mitigation of potential risks. Although recent Multimodal Large Language Models (MLLMs) have shown promise on general vision–language tasks, our findings indicate that zero-shot MLLMs still underperform compared to domain-specific methods in fine-grained, spatially grounded risk assessment. To address this gap, we propose DriveSafe, a framework for risk-aware scene understanding that leverages structured natural language descriptions. Specifically, our method first generates spatially grounded captions enriched with multimodal context, including motion, spatial, and depth cues. These captions are then used for downstream risk assessment, explicitly identifying hazardous objects, their locations, and the unsafe behaviors they imply, followed by actionable safety suggestions. To further improve performance, we employ caption–risk pairings to fine-tune a lightweight adapter module, efficiently injecting domain-specific knowledge into the base LLM. By conditioning risk assessment on explicit language-based scene representations, DriveSafe achieves significant gains over both zero-shot MLLMs and prior domain-specific baselines. Exhaustive experiments on the DRAMA benchmark demonstrate state-of-the-art performance, while ablation studies validate the effectiveness of our key design choices. We plan to release our codebase to support future research.

Methodology

Fig: Our proposed DriveSafe framework for the caption generation and safety suggestion task in driving. We first derive contextual cues to guide caption generation, and then use the resulting captions for risk assessment and safety suggestion.

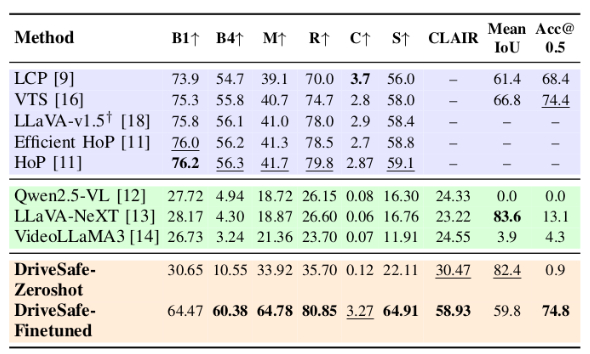
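The two-stage flow described above (contextual cues, then a caption, then risk assessment and a safety suggestion) can be sketched roughly as follows. This is only an illustrative outline of the data flow, not the DriveSafe implementation: every name here (`ContextCues`, `RiskReport`, `build_caption_prompt`, `build_risk_prompt`, `run_pipeline`), the prompt wording, and the stub models are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

# NOTE: all names and prompt strings are hypothetical and exist only to
# illustrate the caption-then-assess flow; none come from the DriveSafe code.


@dataclass
class ContextCues:
    motion: str   # textual summary of object motion
    spatial: str  # where the object sits in the frame
    depth: str    # coarse distance estimate


@dataclass
class RiskReport:
    risky_object: str
    box: Tuple[int, int, int, int]  # (x1, y1, x2, y2) in pixels
    suggestion: str


def build_caption_prompt(cues: ContextCues) -> str:
    """Stage 1 input: fold the contextual cues into a captioning prompt."""
    return (
        "Describe the driving scene with object locations.\n"
        f"Motion: {cues.motion}\n"
        f"Spatial: {cues.spatial}\n"
        f"Depth: {cues.depth}"
    )


def build_risk_prompt(caption: str) -> str:
    """Stage 2 input: condition risk assessment on the generated caption."""
    return (
        "From the scene description, name the risky object, its location, "
        "and a safety suggestion.\n"
        f"Scene: {caption}"
    )


def run_pipeline(
    cues: ContextCues,
    captioner: Callable[[str], str],
    assessor: Callable[[str], RiskReport],
) -> RiskReport:
    """Chain the two stages: cues -> caption -> risk report."""
    caption = captioner(build_caption_prompt(cues))
    return assessor(build_risk_prompt(caption))


# Stub models standing in for the real captioner and risk assessor.
def stub_captioner(prompt: str) -> str:
    return "A pedestrian is crossing from the right, close to the ego vehicle."


def stub_assessor(prompt: str) -> RiskReport:
    return RiskReport("pedestrian", (410, 220, 470, 380), "Slow down and yield.")


cues = ContextCues(motion="moving left", spatial="right side", depth="near")
report = run_pipeline(cues, stub_captioner, stub_assessor)
```

Passing the captioner and assessor in as callables keeps the sketch independent of any particular model; the stubs can be replaced with real model calls without changing `run_pipeline`.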

Results

Table: Performance comparison of Caption Generation and Risky Object Grounding across Existing Methods, General VLMs, and DriveSafe on the DRAMA dataset.

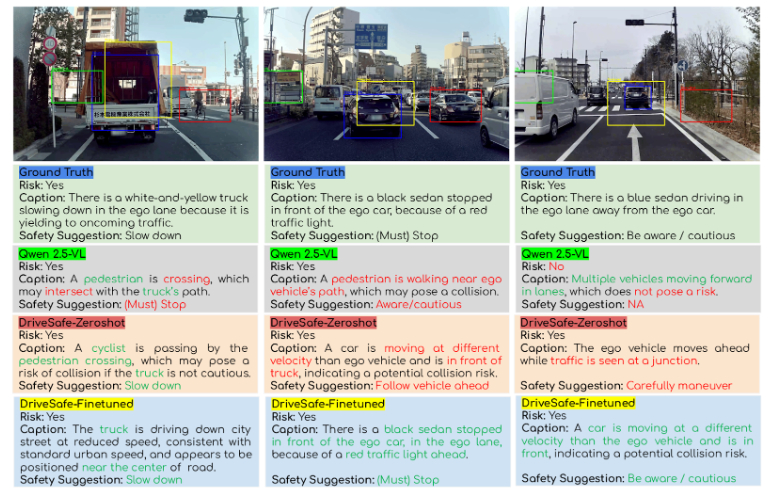
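This page does not state which metric is used to score risky object grounding. As a point of reference only, predicted and ground-truth boxes are commonly compared with intersection over union (IoU); the minimal sketch below assumes boxes in `(x1, y1, x2, y2)` format, and the helper name `box_iou` is hypothetical.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2), with x2 >= x1 and y2 >= y1


def box_iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes (assumed metric)."""
    # Corners of the overlapping rectangle, if the boxes overlap at all.
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])

    # Clamp to zero so disjoint boxes contribute no intersection area.
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)

    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter

    # Two degenerate (zero-area) boxes have no meaningful overlap.
    return inter / union if union > 0 else 0.0
```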

Fig: Qualitative comparison of DriveSafe-ZeroShot, DriveSafe-Finetuned, and Qwen2.5-VL on three driving scenarios from the DRAMA dataset. Risky object grounding is shown with bounding boxes, with the respective models indicated by text highlighting; generated captions and safety suggestions are marked as correct (green) or incorrect (red).

Citation

@inproceedings{drivesafe2026icra,

author = {Sainithin Artham and Shankar Gangisetty and Avijit Dasgupta and C. V. Jawahar},

title = {DriveSafe: A Framework for Risk Detection and Safety Suggestions in Driving Scenarios},

booktitle = {},

series = {},

volume = {},

pages = {},

publisher = {},

year = {2026},

}

Acknowledgements

This work is supported by iHub-Data and Mobility at IIIT Hyderabad.