MOTOR: A Multimodal Dataset for Two-Wheeler Rider Behavior Understanding

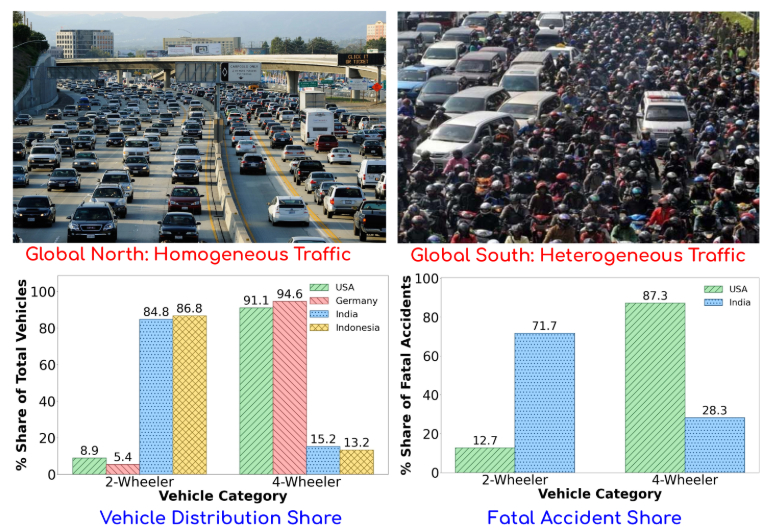

Fig: Comparison of traffic contexts and accident statistics across the Global North and South. Top row: four-wheelers dominate traffic in the USA, while two-wheelers dominate in India. Bottom row: distribution of vehicles (two-wheeler vs. four-wheeler) and fatal accidents across the Global North and South.

Abstract

Two-wheelers account for a disproportionately high share of road fatalities in the Global South. Research on rider behavior, however, lags far behind research on four-wheelers, where multimodal datasets have driven major advances in Advanced Driver Assistance Systems (ADAS). To address this gap, we present the MOtorized TwO-wheeler Rider (MOTOR) dataset, the first large-scale, multi-view, multimodal resource dedicated to two-wheelers in dense, unstructured traffic. MOTOR comprises 2,500 sequences (25+ hours) collected from 16 riders and integrates synchronized front, rear, and helmet videos, rider eye gaze from wearable trackers, on-road audio, and telemetry (GPS, accelerometer, gyroscope). Rich annotations capture traffic context, rider state, 12 riding maneuvers spanning conventional and unconventional behaviors, and legality labels (Legal, Illegal, Unspecified). We benchmark rider behavior recognition and maneuver legality classification using state-of-the-art video action recognition backbones (CNN and Transformer-based), extended with multimodal fusion, and find that combining RGB, gaze, and telemetry consistently yields the best performance. MOTOR thus provides a unique foundation for advancing safety-critical understanding of two-wheeler riding. It offers the research community a benchmark to develop and evaluate models for behavior analysis, legality-aware prediction, and intelligent transportation systems. The dataset and code will be made publicly available.
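
To make the modality and label lists in the abstract concrete, here is a minimal Python sketch, assuming NumPy. `MotorSequence`, its field names, the telemetry column layout, and `late_fusion` are hypothetical illustrations, not the released MOTOR file format and not necessarily the fusion used in the paper's baselines. Late fusion by (weighted) averaging of per-modality class probabilities is shown only as one simple way to combine RGB, gaze, and telemetry predictions.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Optional

import numpy as np


class Legality(Enum):
    """The three legality labels named in the MOTOR annotations."""
    LEGAL = "Legal"
    ILLEGAL = "Illegal"
    UNSPECIFIED = "Unspecified"


@dataclass
class MotorSequence:
    """Hypothetical container for one annotated sequence.

    Field names and array layouts are illustrative assumptions,
    not the released MOTOR file format.
    """
    sequence_id: str
    front_video: str        # path to the front-camera clip
    rear_video: str         # path to the rear-camera clip
    helmet_video: str       # path to the helmet-camera clip
    gaze: np.ndarray        # (T, 2) gaze points from the wearable tracker (assumed)
    telemetry: np.ndarray   # (T, 8): lat, lon, accel xyz, gyro xyz (assumed layout)
    maneuver: int           # index into the 12 riding-maneuver classes
    legality: Legality


def softmax(logits: np.ndarray) -> np.ndarray:
    """Numerically stable softmax over the last (class) axis."""
    z = logits - logits.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def late_fusion(
    logits_per_modality: Dict[str, np.ndarray],
    weights: Optional[Dict[str, float]] = None,
) -> np.ndarray:
    """Weighted average of per-modality class probabilities.

    Each value has shape (batch, num_classes); the result has the same shape.
    Equal weights are used when `weights` is None.
    """
    names = list(logits_per_modality)
    w = np.array([1.0 if weights is None else weights[n] for n in names])
    probs = np.stack([softmax(logits_per_modality[n]) for n in names])  # (M, B, C)
    return np.tensordot(w / w.sum(), probs, axes=1)  # (B, C)


if __name__ == "__main__":
    # Toy logits for a batch of one clip over the three legality classes.
    fused = late_fusion({
        "rgb": np.array([[2.0, 0.5, 0.1]]),
        "gaze": np.array([[1.0, 1.0, 0.0]]),
        "telemetry": np.array([[0.2, 1.5, 0.3]]),
    })
    labels = list(Legality)
    print(labels[int(fused.argmax(axis=-1)[0])].value)
```

Averaging probabilities rather than raw logits keeps each modality's contribution on the same scale; per-modality weights could be tuned on a validation split if one stream (for example RGB) is more reliable than the others.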

The MOTOR Dataset

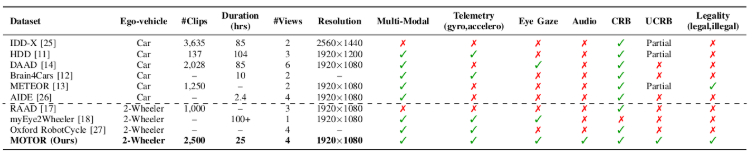

Table: Comparison of four-wheeler and two-wheeler behavior datasets. Our dataset is unique in combining multimodal, multi-view video from ego-vehicle and helmet cameras, eye gaze, annotated conventional and unconventional riding behaviors, and legality-related riding scenarios. Note: CRB denotes conventional riding behaviors and UCRB denotes unconventional riding behaviors.

Fig: Helmet-view data samples. (a) The ego-rider weaves through dense, slow traffic, overtaking multiple vehicles across lanes. (b) The rider squeezes through a narrow gap between a bus and a car, narrowly avoiding the bus. (c) The rider travels in the wrong lane against dense oncoming traffic, disrupting its flow. (d) The rider turns their head fully toward a roadside building, taking their gaze off the road amid fast-moving traffic.

Results

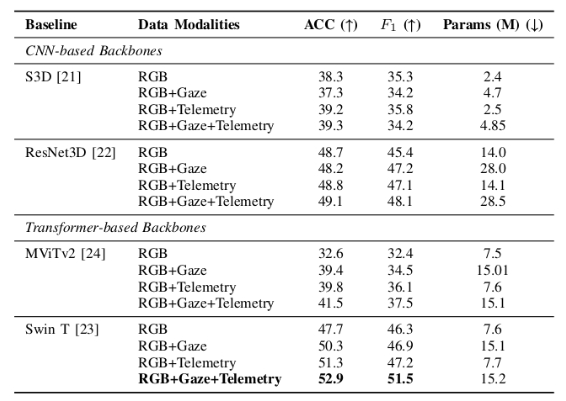

Table: Rider Behavior Classification. Comparison of CNN and Transformer-based baselines on the MOTOR dataset across different modality combinations.

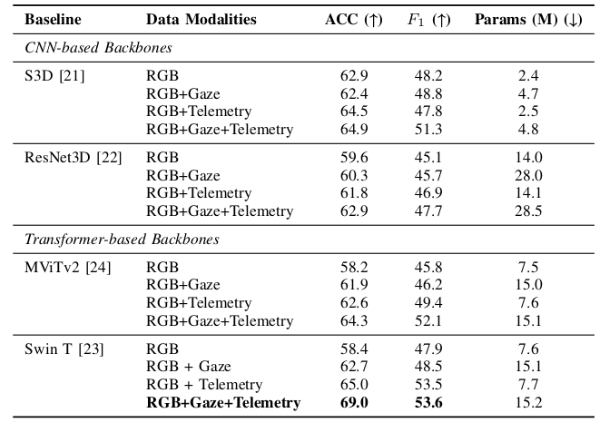

Table: Rider Legality Classification. Comparison of CNN and Transformer-based baselines on the MOTOR dataset across different modality combinations.

Citation

@inproceedings{motor2026icra,
  author    = {Varun Paturkar and Shankar Gangisetty and C. V. Jawahar},
  title     = {{MOTOR}: A Multimodal Dataset for Two-Wheeler Rider Behaviour Understanding},
  booktitle = {},
  series    = {},
  volume    = {},
  pages     = {},
  publisher = {},
  year      = {2026},
}

Acknowledgements

This work is supported by iHub-Data and Mobility at IIIT Hyderabad.