Efficient Image Retrieval Methods For Large Scale Dynamic Image Databases

Suman Karthik

The commoditization of imaging hardware has led to exponential growth in image and video data, making it difficult to access relevant data when it is required. This has led to a great amount of research into multimedia retrieval, and Content Based Image Retrieval (CBIR) in particular. Yet CBIR has not found widespread acceptance in real-world systems. One of the primary reasons is the inability of traditional CBIR systems to scale effectively to large-scale image databases. The introduction of the Bag of Words model for image retrieval has eased some of these issues, yet bottlenecks remain, and the model's utility is limited when it comes to highly dynamic image databases (image databases where the set of images is constantly changing). In this thesis, we focus on developing methods that address the scalability issues of traditional CBIR systems and the adaptability issues of Bag of Words based image retrieval systems.

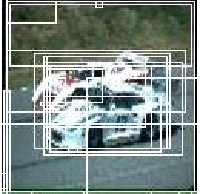

Traditional CBIR systems find relevant images by finding nearest neighbors in a high-dimensional feature space. This is computationally expensive and does not scale as the number of images in the database grows. We address this problem by posing image retrieval as a text retrieval task: each image is transformed into a text document called the Virtual Textual Description (VTD). Once this transformation is done, we further enhance the performance of the system by incorporating a novel relevance feedback algorithm called discriminative relevance feedback. We then use the virtual textual descriptions of images to index and retrieve them efficiently using a data structure called the Elastic Bucket Trie (EBT).
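
The idea of treating images as text documents can be illustrated with a minimal sketch. It is not the thesis's VTD or EBT, whose details are not given here: it assumes a fixed, hypothetical codebook, maps each feature vector to its nearest codeword, and retrieves images through a plain inverted index. All function names (`nearest_codeword`, `to_text`, `build_index`, `search`) are illustrative assumptions.

```python
from collections import defaultdict


def nearest_codeword(vec, codebook):
    """Index of the codeword closest to vec (squared Euclidean distance)."""
    return min(
        range(len(codebook)),
        key=lambda i: sum((a - b) ** 2 for a, b in zip(vec, codebook[i])),
    )


def to_text(features, codebook):
    """Turn one image's feature vectors into a list of word-like tokens."""
    return [f"w{nearest_codeword(v, codebook)}" for v in features]


def build_index(docs):
    """Inverted index: token -> set of image ids whose text contains it."""
    index = defaultdict(set)
    for image_id, tokens in docs.items():
        for token in tokens:
            index[token].add(image_id)
    return index


def search(query_tokens, index):
    """Rank images by how many distinct query tokens they share."""
    scores = defaultdict(int)
    for token in set(query_tokens):
        for image_id in index.get(token, ()):
            scores[image_id] += 1
    # Highest score first; ties broken by image id for a stable order.
    return sorted(scores, key=lambda i: (-scores[i], i))


# Hypothetical toy data: a 3-word codebook and three tiny "images".
codebook = [(0.0, 0.0), (1.0, 1.0), (5.0, 5.0)]
images = {
    "a": [(0.1, 0.0), (0.9, 1.2)],
    "b": [(5.1, 4.8), (4.9, 5.2)],
    "c": [(1.1, 0.9), (5.0, 5.0)],
}
docs = {image_id: to_text(f, codebook) for image_id, f in images.items()}
index = build_index(docs)
query = to_text([(1.0, 1.0), (0.0, 0.1)], codebook)
ranked = search(query, index)
```

The lookup cost depends on the number of query tokens and the length of their posting lists, not on a nearest-neighbor scan over every image, which is the property that makes the text formulation attractive at scale.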

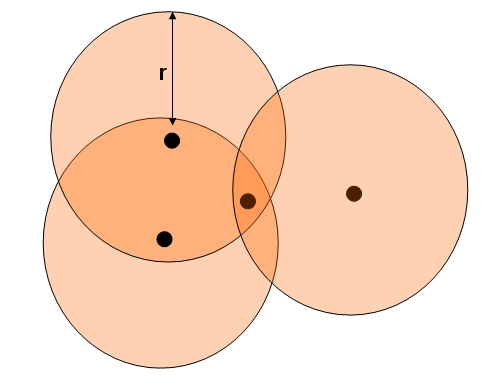

Contemporary bag of visual words approaches to image retrieval perform one-time offline vector quantization to create the visual vocabulary. However, these methods do not adapt well to dynamic image databases, whose nature constantly changes as new data is added. In this thesis, we design, present and experimentally examine a novel method for incremental vector quantization (IVQ) to be used in image and video retrieval systems with dynamic databases.
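
To make the contrast with one-time offline quantization concrete, here is a rough sketch of a vocabulary that grows as data arrives. It uses a generic online "leader" clustering rule with a fixed radius, which is an assumption for illustration and not the IVQ method of the thesis; the class name `IncrementalQuantizer` and the `radius` parameter are hypothetical.

```python
class IncrementalQuantizer:
    """Online vocabulary: a new codeword is created whenever a feature
    falls farther than `radius` from every existing codeword."""

    def __init__(self, radius):
        self.radius2 = radius ** 2  # compare squared distances
        self.centroids = []         # one centroid per visual word
        self.counts = []            # features absorbed by each word

    def add(self, vec):
        """Quantize vec, updating the vocabulary; return the word index."""
        best, best_d = None, None
        for i, c in enumerate(self.centroids):
            d = sum((a - b) ** 2 for a, b in zip(vec, c))
            if best_d is None or d < best_d:
                best, best_d = i, d
        if best is None or best_d > self.radius2:
            # No word is close enough: extend the vocabulary.
            self.centroids.append(list(vec))
            self.counts.append(1)
            return len(self.centroids) - 1
        # Otherwise move the matched centroid toward vec (running mean).
        self.counts[best] += 1
        n = self.counts[best]
        self.centroids[best] = [
            c + (v - c) / n for c, v in zip(self.centroids[best], vec)
        ]
        return best


quantizer = IncrementalQuantizer(radius=1.0)
words = [quantizer.add(v) for v in [(0.0, 0.0), (0.2, 0.0), (5.0, 5.0)]]
```

In this sketch no re-clustering of earlier data is needed when new images arrive; whether and how existing codewords should be split or merged is a design question the sketch deliberately leaves out.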

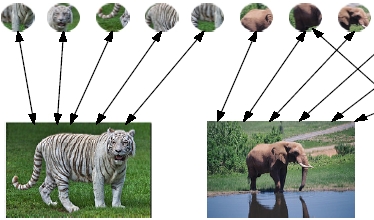

Semantic indexing has been invaluable in improving the performance of bag of words based image retrieval systems. However, contemporary approaches to semantic indexing for bag of words image retrieval do not adapt well to dynamic image databases. We introduce and experimentally examine a bipartite graph model (BGM), a scalable data structure that aids in online semantic indexing, and a cash flow algorithm that works on the BGM to retrieve semantically relevant images from the database. We also demonstrate how traditional text search engines can be used to build scalable image retrieval systems.
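
The following sketch shows one way a bipartite image-word graph and a cash-spreading retrieval step could fit together. The propagation rule (each node splits its cash equally among its neighbors for a fixed number of hops) is an assumption made for illustration, not the thesis's exact algorithm; `BipartiteGraph`, `cash_flow`, `cash` and `hops` are hypothetical names.

```python
from collections import defaultdict


class BipartiteGraph:
    """Images on one side, visual words on the other."""

    def __init__(self):
        self.image_to_words = defaultdict(set)
        self.word_to_images = defaultdict(set)

    def add_image(self, image_id, words):
        """Online insertion: only the new image's edges are touched."""
        for w in words:
            self.image_to_words[image_id].add(w)
            self.word_to_images[w].add(image_id)


def cash_flow(graph, query_words, cash=1.0, hops=3):
    """Spread cash from the query words across the graph and rank
    images by the total cash they receive (assumed equal-split rule)."""
    received = defaultdict(float)
    known = [w for w in set(query_words) if w in graph.word_to_images]
    frontier = {("word", w): cash / len(known) for w in known}
    for _ in range(hops):
        nxt = defaultdict(float)
        for (kind, node), amount in frontier.items():
            if kind == "word":
                neighbours, other = graph.word_to_images[node], "image"
            else:
                neighbours, other = graph.image_to_words[node], "word"
            share = amount / len(neighbours)
            for n in neighbours:
                nxt[(other, n)] += share
                if other == "image":
                    received[n] += share
        frontier = nxt
    return sorted(received, key=lambda i: (-received[i], i))


graph = BipartiteGraph()
graph.add_image("a", ["sky", "sea"])
graph.add_image("b", ["sea", "sand"])
graph.add_image("c", ["car"])
ranked = cash_flow(graph, ["sky"])
```

In this toy graph, image `b` shares no word with the query `sky`, yet it receives cash through the word `sea` that it shares with image `a`. That indirect path is the kind of co-occurrence relationship semantic indexing is meant to capture, and inserting an image requires no global recomputation.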

| Year of completion: | July 2009 |
| Advisor: | C. V. Jawahar |

Related Publications

- Suman Karthik, Chandrika Pulla and C.V. Jawahar, Incremental Online Semantic Indexing for Image Retrieval in Dynamic Databases, Proceedings of the International Workshop on Semantic Learning Applications in Multimedia (SLAM: CVPR 2009), 20-25 June 2009, Miami, Florida, USA.

- Suman Karthik, C.V. Jawahar, Analysis of Relevance Feedback in Content Based Image Retrieval, Proceedings of the 9th International Conference on Control, Automation, Robotics and Vision (ICARCV), 2006, Singapore.

- Suman Karthik, C.V. Jawahar, Virtual Textual Representation for Efficient Image Retrieval, Proceedings of the 3rd International Conference on Visual Information Engineering (VIE), 26-28 September 2006, Bangalore, India.

- Suman Karthik, C.V. Jawahar, Efficient Region Based Indexing and Retrieval for Images with Elastic Bucket Tries, Proceedings of the International Conference on Pattern Recognition (ICPR), 2006.