Learning to Hash-tag Videos with Tag2Vec

Abstract

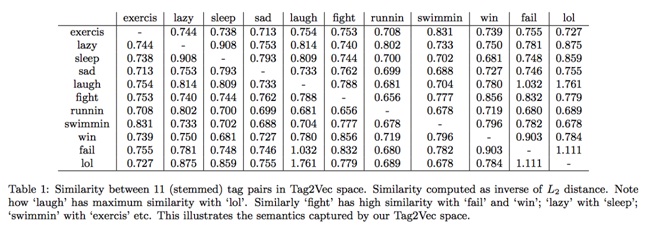

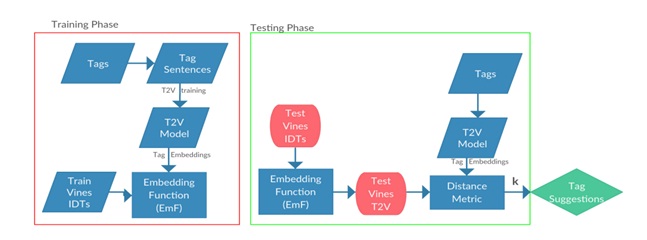

User-given tags or labels are valuable resources for semantic understanding of visual media such as images and videos. Recently, a new type of labeling mechanism known as hashtags has become increasingly popular on social media sites. In this paper, we study the problem of generating relevant and useful hash-tags for short video clips. Traditional data-driven approaches for tag enrichment and recommendation use direct visual similarity for label transfer and propagation. We attempt to learn a direct low-cost mapping from video to hash-tags using a two-step training process. We first employ a natural language processing (NLP) technique, skip-gram models with neural network training, to learn a low-dimensional vector representation of hash-tags (Tag2Vec) using a corpus of ∼10 million hash-tags. We then train an embedding function to map video features to the low-dimensional Tag2Vec space. We learn this embedding for 29 categories of short video clips with hash-tags. A query video without any tag information can then be directly mapped to the vector space of tags using the learned embedding, and relevant tags can be found by performing a simple nearest-neighbor retrieval in the Tag2Vec space. We validate the relevance of the tags suggested by our system qualitatively and quantitatively with a user study.

Aim

The videos we target come from vine.co. They are recorded by users in unconstrained environments and contain significant camera shake, lighting variability, abrupt shots, etc. We use the folksonomies associated with the videos by their uploaders to create a vector space. This Tag2Vec space is trained using ~2 million hash-tags downloaded for ~15000 categories. The main motivation is to create a plug-and-use system for categorising vines.

Contribution

- We create a system for easily tagging vines

- We provide training sentences composed of hash-tags, as well as vines with their hash-tags for the test categories
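
To make the idea of "training sentences composed of hash-tags" concrete, the sketch below shows how a skip-gram model typically turns one such sentence into (center, context) training pairs. It is only an illustration: the function name `skipgram_pairs`, the window size, and the example hash-tags are assumptions, not taken from the released code.

```python
def skipgram_pairs(sentence, window=2):
    """Return (center, context) pairs for one hash-tag sentence.

    `sentence` is a list of hash-tags that co-occur on one post.
    `window` is a hypothetical context size; the actual value used
    to train Tag2Vec is not stated on this page.
    """
    pairs = []
    for i, center in enumerate(sentence):
        lo = max(0, i - window)
        hi = min(len(sentence), i + window + 1)
        for j in range(lo, hi):
            if j != i:  # a tag is never its own context
                pairs.append((center, sentence[j]))
    return pairs


# Made-up example sentence, for illustration only.
example = ["#dog", "#puppy", "#cute"]
pairs = skipgram_pairs(example, window=1)
```

A skip-gram network is then trained to predict the context tag from the center tag, so tags that appear in similar contexts end up with nearby vectors.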

Dataset

| Name | Link | Description |
| --- | --- | --- |
| Code.tar | Link | Code for running the main classification along with other utility programs |
| Vine.tar | Link | Download link for vines |
| HashTags.tar | Link | Training hashtags |
| Paper.pdf | Link | GCPR submission |
| Supplementary | Link | Supplementary submission |

Approach

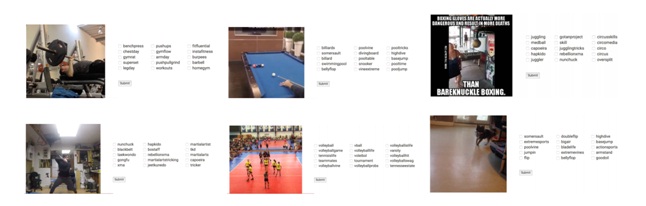
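
The two-stage pipeline from the abstract (embed video features into the Tag2Vec space, then retrieve the nearest tags) can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the embedding is assumed to be a linear map fit by ridge regression, similarity is assumed to be cosine, and all names (`fit_linear_embedding`, `suggest_tags`) and the toy vectors are hypothetical.

```python
import numpy as np


def fit_linear_embedding(video_feats, tag_vecs, reg=1e-3):
    """Fit W minimizing ||X W - Y||^2 + reg * ||W||^2 (ridge regression).

    Assumption: a linear map stands in for the paper's embedding function.
    video_feats: (n_videos, d_video); tag_vecs: (n_videos, d_tag).
    """
    d = video_feats.shape[1]
    gram = video_feats.T @ video_feats + reg * np.eye(d)
    return np.linalg.solve(gram, video_feats.T @ tag_vecs)


def suggest_tags(query_feat, W, tag_names, tag_matrix, k=3):
    """Return the k tags whose vectors are most cosine-similar to the embedded query."""
    q = query_feat @ W
    q = q / (np.linalg.norm(q) + 1e-12)
    norms = np.linalg.norm(tag_matrix, axis=1, keepdims=True) + 1e-12
    sims = (tag_matrix / norms) @ q
    top = np.argsort(-sims)[:k]
    return [tag_names[i] for i in top]


# Toy data, invented for illustration: 2-D "Tag2Vec" vectors, 3-D video features.
tag_names = ["#dog", "#puppy", "#soccer", "#goal"]
tag_matrix = np.array([[1.0, 0.1], [0.9, 0.2], [0.1, 1.0], [0.2, 0.9]])
X = np.array([[1.0, 0.0, 0.0], [0.9, 0.1, 0.0], [0.0, 1.0, 0.0], [0.1, 0.9, 0.0]])
Y = np.array([[0.95, 0.15], [0.95, 0.15], [0.15, 0.95], [0.15, 0.95]])

W = fit_linear_embedding(X, Y)
suggested = suggest_tags(np.array([1.0, 0.0, 0.0]), W, tag_names, tag_matrix, k=2)
```

Because the query is mapped once and compared against precomputed tag vectors, suggesting tags for a new video needs no visual comparison against the training videos, which is the "low-cost" property the abstract refers to.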

Qualitative Results

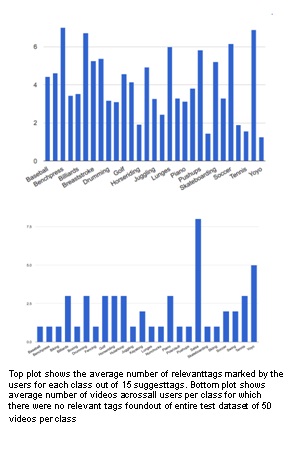

We conduct a user study in which a user is presented with a video and 15 tags suggested by our system. The user marks the relevant tags, and we then compute the average number of relevant tags across classes.
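
The aggregation described above might be computed along these lines. This is a sketch that assumes a macro average (mean per class first, then mean over classes); the page does not specify the exact averaging, and the function name and sample responses are made up for illustration.

```python
from collections import defaultdict


def mean_relevant_per_class(responses):
    """Average the number of tags marked relevant, per class and overall.

    responses: iterable of (class_name, num_relevant) pairs, one per
    judged video, where num_relevant is out of the 15 suggested tags.
    Assumption: the overall score is the unweighted mean of class means.
    """
    by_class = defaultdict(list)
    for cls, n_relevant in responses:
        by_class[cls].append(n_relevant)
    per_class = {cls: sum(v) / len(v) for cls, v in by_class.items()}
    overall = sum(per_class.values()) / len(per_class)
    return per_class, overall


# Invented responses, not results from the actual study.
per_class, overall = mean_relevant_per_class(
    [("dog", 10), ("dog", 8), ("soccer", 6)]
)
```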

Associated People

- Aditya Singh

- Saurabh Saini

- Rajvi Shah

- P J Narayanan