Enhancing Weak Biometric Authentication by Adaptation and Improved User-Discrimination

Vandana Roy

Biometric technologies are becoming the foundation of an extensive array of person identification and verification solutions. Biometrics is defined as the science of recognising a person based on certain physiological (fingerprints, face, hand-geometry) or behavioral (voice, gait, keystrokes) characteristics. Weak biometrics (hand-geometry, face, voice) are traits that possess low discriminating content and change over time for each individual. They therefore show lower performance than strong biometrics (e.g. fingerprints, iris, retina). Owing to the rapidly decreasing cost of hardware and computation, biometrics has found wide use in civilian applications (Time and Attendance Monitoring, Physical Access to Buildings, Human-Computer Interfaces, etc.) in addition to forensic ones (e.g. criminal and terrorist identification). Various factors come into the picture when selecting biometric traits for civilian applications, the most important of which are user psychology and acceptability. Most weak biometric traits have little or no association with criminal history, unlike fingerprints (a strong biometric), and data acquisition is simple with weak biometrics. For these reasons, weak biometric traits are often better accepted for civilian applications than strong biometric traits. Moreover, far less research has gone into this area than into strong biometrics.

Because of their low discriminating content, weak biometric traits result in poor verification performance. We propose a feature selection technique called Single Class Hierarchical Discriminant Analysis (SCHDA), designed specifically for authentication in biometric systems. SCHDA recursively identifies the samples that overlap with the samples of the claimed identity in the discriminant space built by the single-class discriminant criterion. If samples of the claimed identity are termed "positive" samples and all other samples "negative" samples, the single-class discriminant criterion finds an optimal transformation that maximizes the ratio of the negative scatter about the positive mean to the positive within-class scatter, thereby pulling the positive samples together and pushing the negative samples away from the positive mean. SCHDA thus builds an optimal user-specific discriminant space for each individual, in which the samples of the claimed identity are well separated from the samples of all other users. The authentication performance of this technique is compared with that of other popular discriminant analysis techniques in the literature, and a significant improvement has been observed.
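The single-class criterion described above can be written compactly. The notation here is an assumption introduced for illustration and is not taken from the thesis: $x_i$ are the $N_p$ positive samples, $y_j$ the $N_n$ negative samples, $m_P$ the positive mean, and $W$ the transformation being sought. The thesis may use a different normalization or regularization.

$$
W^{*} = \arg\max_{W} \frac{\lvert W^{\top} S_{N} W \rvert}{\lvert W^{\top} S_{P} W \rvert},
\qquad
S_{N} = \sum_{j=1}^{N_n} (y_j - m_P)(y_j - m_P)^{\top},
\qquad
S_{P} = \sum_{i=1}^{N_p} (x_i - m_P)(x_i - m_P)^{\top}
$$

Both scatter matrices are measured about the positive mean $m_P$, which is what makes the criterion single-class: the negatives are not modeled as a cluster of their own, only pushed away from the claimed identity.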

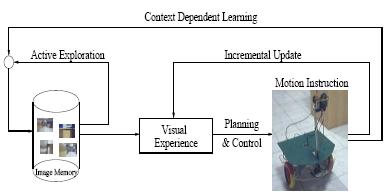

The second problem that leads to low authentication accuracy is the poor permanence of weak biometric traits, which change for various reasons (e.g. ageing, or the person gaining or losing weight). Civilian applications usually operate in a cooperative or monitored mode in which users can give feedback to the system when errors occur. We propose an intelligent adaptive framework that uses this feedback to incrementally update the parameters of the feature selection and verification framework for each individual. This technique does not require the system to be re-trained to handle changing features.
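As a rough illustration of updating without re-training, the sketch below folds one newly confirmed sample into a user's running mean and scatter matrix using a standard rank-one (Welford-style) update. This is an assumption about how such statistics could be maintained, not the update rule used in the thesis; the function name `update_positive_stats` is hypothetical.

```python
def update_positive_stats(mean, scatter, n, x):
    """Fold one confirmed sample x into a user's running statistics.

    mean:    list of d floats (current mean of the user's samples)
    scatter: d x d list of lists (sum of outer products about the mean)
    n:       number of samples already absorbed
    Returns the updated (mean, scatter, n) without revisiting old samples.
    """
    d = len(x)
    delta_old = [x[i] - mean[i] for i in range(d)]       # x minus old mean
    new_mean = [mean[i] + delta_old[i] / (n + 1) for i in range(d)]
    delta_new = [x[i] - new_mean[i] for i in range(d)]   # x minus new mean
    new_scatter = [
        [scatter[i][j] + delta_old[i] * delta_new[j] for j in range(d)]
        for i in range(d)
    ]
    return new_mean, new_scatter, n + 1


# Usage: start from empty statistics and absorb samples one at a time.
mean, scatter, n = [0.0, 0.0], [[0.0, 0.0], [0.0, 0.0]], 0
for sample in ([1.0, 2.0], [2.0, 4.0], [4.0, 3.0]):
    mean, scatter, n = update_positive_stats(mean, scatter, n, sample)
```

The result matches what a batch computation over all three samples would give, which is the property that lets the stored samples be discarded.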

The third factor explored to improve authentication performance for civilian applications is the participation pattern of the enrolled users. As new users are enrolled into the system, performance degrades because of the increasing number of users. Traditionally, the system must be re-trained periodically with the existing users to address this issue. An interesting observation is that although the number of users enrolled in the system can be very high, the number of users who regularly participate in the authentication process is comparatively low. Modeling the variation in the participating population therefore helps to bypass the re-training process. We propose to model this variation using Markov models. Using these models, the prior probability of participation of each individual is computed and incorporated into the traditional feature selection framework, giving more weight to the parameters of regularly participating users. Both structured and unstructured modes of variation in participation were explored. Experiments on varied datasets support our claim that incorporating the prior probability of participation helps to improve the performance of a biometric system over time.
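One simple way to picture a participation prior is a two-state (absent/present) first-order Markov chain per user, estimated from that user's attendance history. The sketch below makes that assumption; the state design, the smoothing constant, and the function names `participation_prior` and `normalized_priors` are illustrative and not taken from the thesis.

```python
def participation_prior(history, smoothing=1.0):
    """Estimate P(user participates in the next session).

    history: non-empty list of 0/1 flags, one per past session (1 = participated).
    A two-state first-order Markov chain is fitted by counting transitions,
    with additive smoothing so unseen transitions keep non-zero probability.
    """
    # counts[a][b] = smoothed number of transitions from state a to state b
    counts = [[smoothing, smoothing], [smoothing, smoothing]]
    for prev, cur in zip(history, history[1:]):
        counts[prev][cur] += 1
    row = counts[history[-1]]          # transitions out of the latest state
    return row[1] / (row[0] + row[1])


def normalized_priors(histories):
    """Turn per-user estimates into priors that sum to one over all users."""
    raw = {user: participation_prior(h) for user, h in histories.items()}
    total = sum(raw.values())
    return {user: p / total for user, p in raw.items()}


# Usage: a regular participant receives a larger prior than an absent one.
priors = normalized_priors({"regular": [1, 1, 1, 1], "absent": [0, 0, 0, 0]})
```

Priors of this kind could then weight each user's contribution when the discriminant space is built, so that rarely seen users influence it less.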

To validate our claims and techniques, we used hand-geometry and keystroke-based biometric traits. The hand images were acquired using a simple low-cost setup consisting of a digital camera and a flat translucent platform with five rigid pegs (to ensure that the acquired images are well aligned). The platform is illuminated from beneath to simplify preprocessing of the acquired images. The hand-geometry features include the lengths of four fingers and the widths at five equidistant points on each finger. Thumb features are not used because these measurements show high variability for the same user. This dataset was used to validate the proposed feature selection technique. For keystroke-based biometrics, the features used were the dwell time (duration of a key-press event) and flight time (duration between a key-release event and the next key-press event) of each key, and the number of times the backspace and delete keys were pressed. Data was collected from subjects who were not accustomed to the particular kind of keyboard used (a French keyboard). The features extracted from this dataset were time-varying and were used to validate the concept of incremental updating.
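The keystroke features defined above can be computed from a log of timed key events. The following is a minimal sketch that assumes each event is recorded as a `(key, press_time, release_time)` tuple in milliseconds; this log format, the key labels, and the function name `keystroke_features` are assumptions for illustration. It returns one value per keystroke, whereas per-key aggregation as described in the text would need a further grouping step.

```python
def keystroke_features(events):
    """Compute dwell times, flight times and a correction count.

    events: list of (key, press_time, release_time) tuples in typing order,
            with times in milliseconds.
    """
    # Dwell time: how long each key was held down.
    dwell = [release - press for _, press, release in events]
    # Flight time: gap between releasing one key and pressing the next.
    # It can be negative when the next key is pressed before the release.
    flight = [nxt[1] - cur[2] for cur, nxt in zip(events, events[1:])]
    # Number of backspace and delete presses.
    corrections = sum(1 for key, _, _ in events if key in ("Backspace", "Delete"))
    return dwell, flight, corrections


# Usage with a three-event log.
dwell, flight, corrections = keystroke_features(
    [("a", 0, 80), ("b", 120, 190), ("Backspace", 300, 350)]
)
```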

In this thesis, we identify and address some of the issues which lead to low performance of authentication using certain weak biometric traits. We also look into the problem of low performance of authentication in large-scale biometrics for civilian applications.

| Year of completion: | 2007 |

| Advisor : | C. V. Jawahar |

Related Publications

Vandana Roy and C. V. Jawahar - Modeling Time-Varying Population for Biometric Authentication, in International Conference on Computing: Theory and Applications (ICCTA), Kolkata, 2007. [PDF]

Vandana Roy and C. V. Jawahar - Hand-Geometry Based Person Authentication Using Incremental Biased Discriminant Analysis, Proceedings of the National Conference on Communication (NCC 2006), Delhi, January 2006, pp. 261-265. [PDF]

Vandana Roy and C. V. Jawahar - Feature Selection for Hand-Geometry Based Person Authentication, Proceedings of the Thirteenth International Conference on Advanced Computing and Communications (ICACCS), Coimbatore, December 2005. [PDF]
