Panoramic Stereo Videos Using a Single Camera

Abstract

We present a practical solution for generating 360° stereo panoramic videos using a single camera. Current approaches either use a moving camera that captures multiple images of a scene, which are then stitched together to form the final panorama, or use multiple synchronized cameras. A moving camera limits the solution to static scenes, while multi-camera solutions require dedicated, calibrated setups. Our approach improves upon the existing solutions in two significant ways. First, it solves the problem using a single camera, thus minimizing the calibration effort and making it possible to convert any digital camera into a panoramic stereo capture device. Second, it captures all the light rays required for stereo panoramas in a single frame using a compact, custom-designed mirror, which makes the design practical to manufacture and easy to use. We analyze several properties of the design and present panoramic stereo and depth estimation results.

Primary Challenges

- To capture all the light rays corresponding to both eyes' views without causing blind spots or occlusions in the resulting panoramas.

- To design an optical system that is compact, easy to calibrate and use, and simple to manufacture.

- To capture 360° stereo panoramas using a single digital camera for an immersive viewing experience.

- To ensure that depth is perceived correctly from the generated stereo panoramas.

Major Contributions

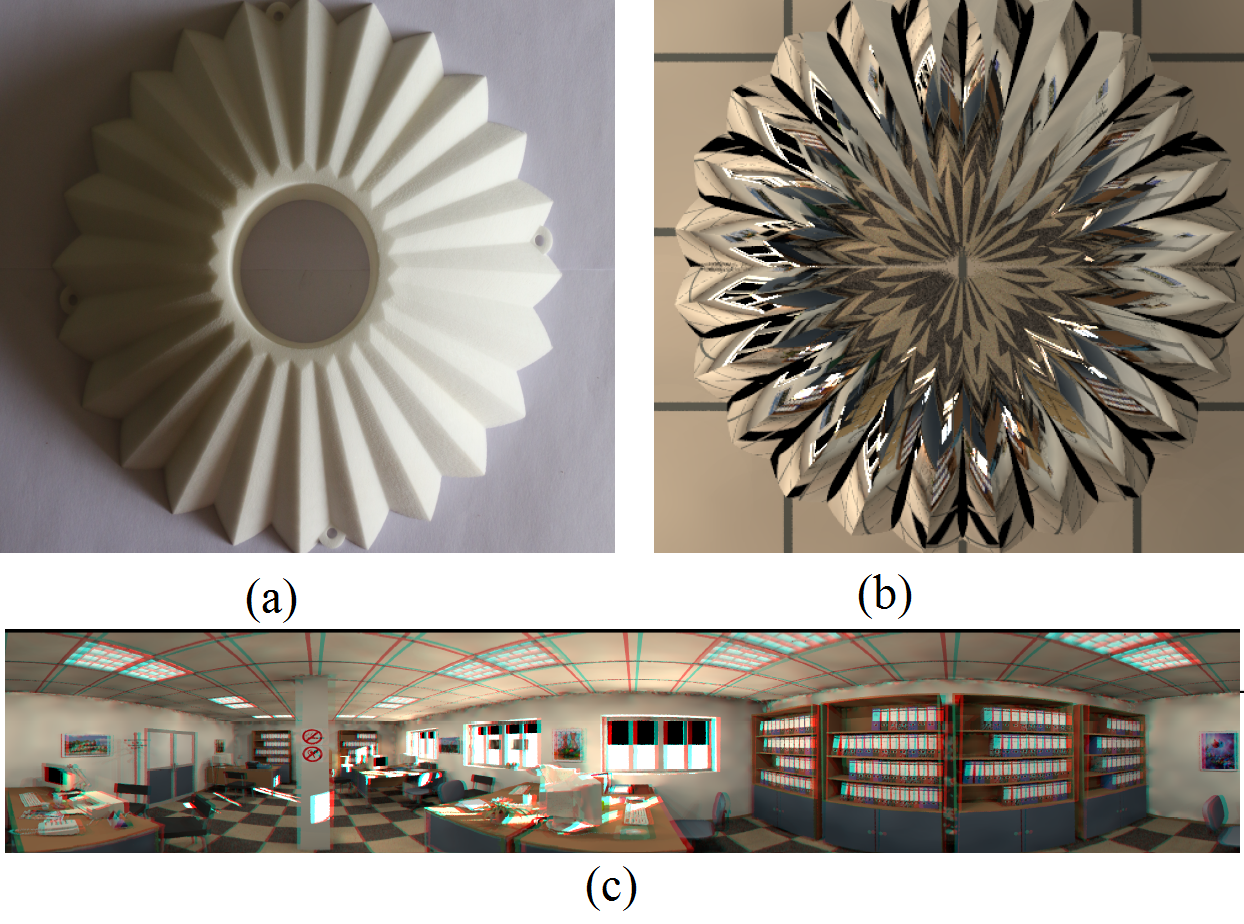

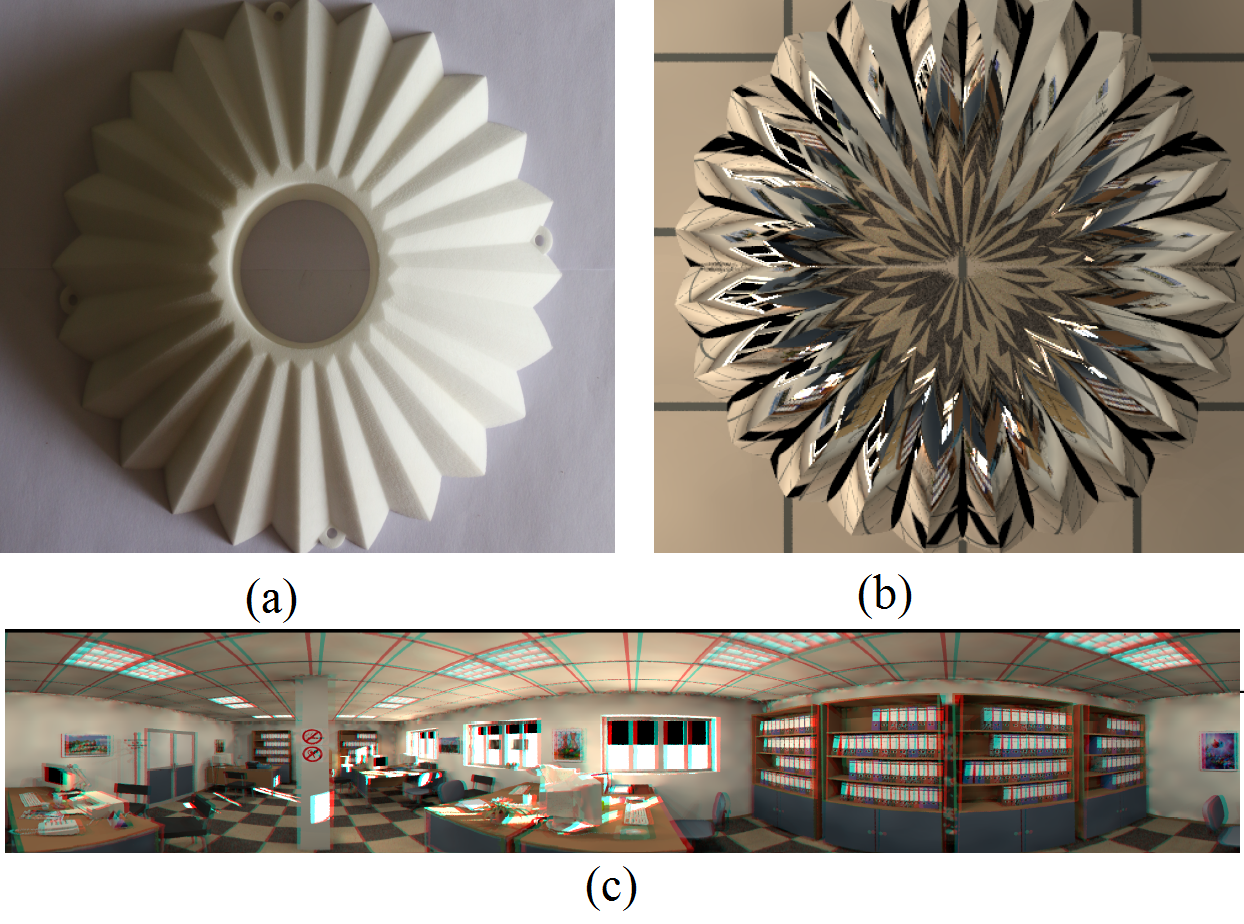

- We propose a custom-designed mirror surface, which we call the "coffee-filter mirror", for generating 360° stereo panoramas. Our optical system has the following advantages over other stereo panoramic devices:

  - Simplicity of Data Acquisition

  - Ease of Calibration and Post-Processing

  - Adaptability to Various Applications

- We have optimised the surface equations of the mirror and calibrated it to avoid visual misperceptions in 3D, such as virtual parallax or misalignment.

- Our design is easy to manufacture, and its size can be scaled up or down according to the application. The resolution of the created panoramas improves with the resolution of the camera sensor.

- While designed with human viewing in mind, the stereo pairs can also be used for depth estimation.

Datasets

We used POV-Ray, a freely available ray-tracing program that accurately simulates image formation by tracing rays through a given scene. We used the 3D scene datasets listed below to demonstrate how the proposed mirror is used to create stereo panoramas.
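When a traced ray meets a mirror surface, a ray tracer such as POV-Ray sends it on along the mirror-reflected direction r = d - 2(d·n)n, where d is the incoming direction and n the unit surface normal. The sketch below illustrates only that reflection rule; it is not POV-Ray's code, and the 45° facet normal in the usage example is a hypothetical value, not the actual coffee-filter mirror surface.

```python
import math
from typing import Tuple

Vec = Tuple[float, float, float]


def dot(a: Vec, b: Vec) -> float:
    """Dot product of two 3D vectors."""
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def normalize(v: Vec) -> Vec:
    """Scale v to unit length; a zero vector has no direction."""
    length = math.sqrt(dot(v, v))
    if length == 0.0:
        raise ValueError("cannot normalize a zero-length vector")
    return (v[0] / length, v[1] / length, v[2] / length)


def reflect(d: Vec, n: Vec) -> Vec:
    """Mirror-reflect direction d about surface normal n: r = d - 2 (d . n) n."""
    n = normalize(n)
    k = 2.0 * dot(d, n)
    return (d[0] - k * n[0], d[1] - k * n[1], d[2] - k * n[2])
```

For example, `reflect((0.0, 0.0, -1.0), (1.0, 0.0, 1.0))` models a ray travelling straight down onto a (hypothetical) facet tilted at 45°, and returns approximately `(1, 0, 0)`: the ray is turned sideways, which is how a slanted mirror facet redirects horizontal scene rays into a downward- or upward-looking camera.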

The datasets used for the simulation can be downloaded from the following links:

For any queries, please email us at our @research.iiit.ac.in addresses.

Results

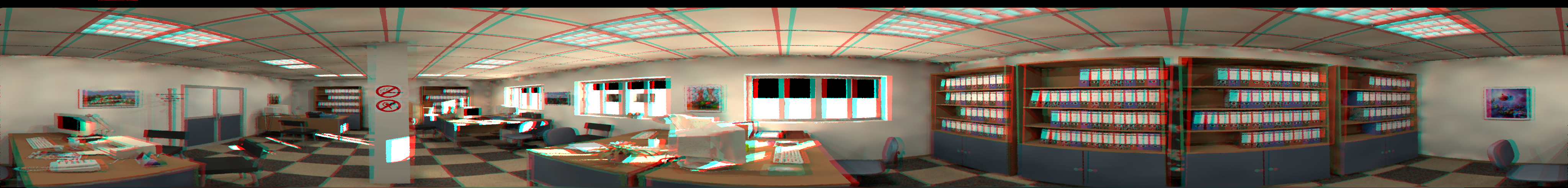

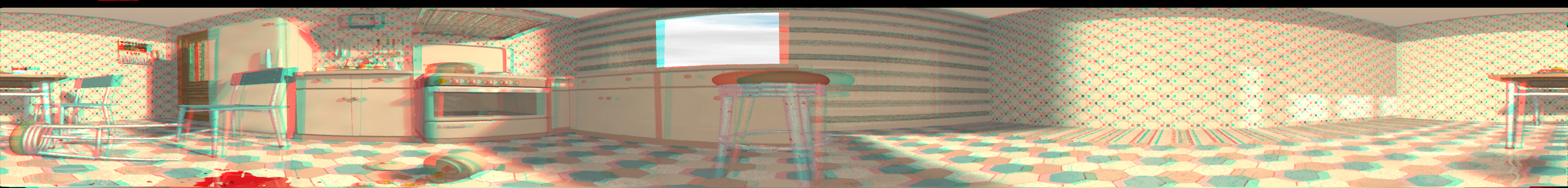

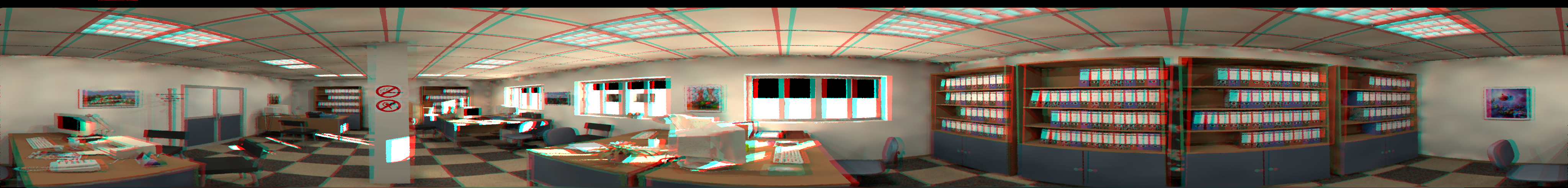

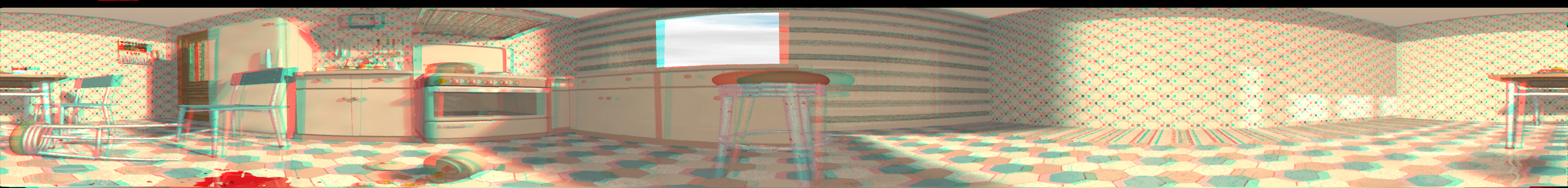

Red-cyan anaglyph panoramas obtained with the proposed setup on the POV-Ray datasets.

360° stereo view of the Patio scene captured using the coffee-filter mirror. The scene can be viewed on any head-mounted display (HMD). More videos will be added soon.
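A red-cyan anaglyph packs a stereo pair into one colour image: the red channel is taken from the left-eye view and the green and blue (cyan) channels from the right-eye view, so red-cyan glasses route each view to the matching eye. The sketch below shows this simple channel-swap method, assuming the left and right panoramas are already aligned RGB arrays of the same size; the page does not state which anaglyph variant was used for the figures, so treat this as an illustration rather than the authors' exact procedure.

```python
import numpy as np


def red_cyan_anaglyph(left: np.ndarray, right: np.ndarray) -> np.ndarray:
    """Combine left/right RGB panoramas of shape (H, W, 3) into a red-cyan anaglyph.

    Red comes from the left-eye view; green and blue come from the right-eye view.
    """
    if left.shape != right.shape or left.ndim != 3 or left.shape[2] != 3:
        raise ValueError("expected two RGB images of identical shape (H, W, 3)")
    out = right.copy()          # keep the right-eye green and blue channels
    out[..., 0] = left[..., 0]  # replace red with the left-eye red channel
    return out


# Usage with tiny synthetic panoramas (placeholder data, not the POV-Ray scenes).
left = np.full((2, 4, 3), 200, dtype=np.uint8)
right = np.full((2, 4, 3), 50, dtype=np.uint8)
ana = red_cyan_anaglyph(left, right)
```

Here every pixel of `ana` is `[200, 50, 50]`. Copying the right image and overwriting one channel keeps the input dtype and avoids any colour-space conversion; more elaborate anaglyph methods trade this simplicity for less retinal rivalry.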

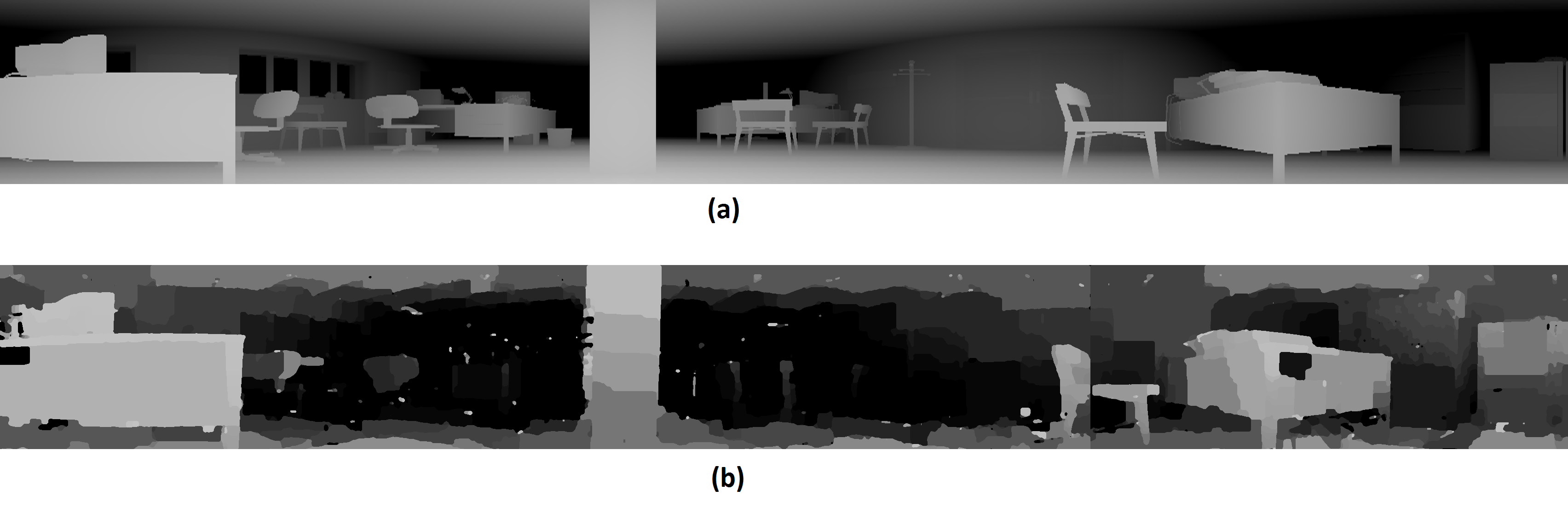

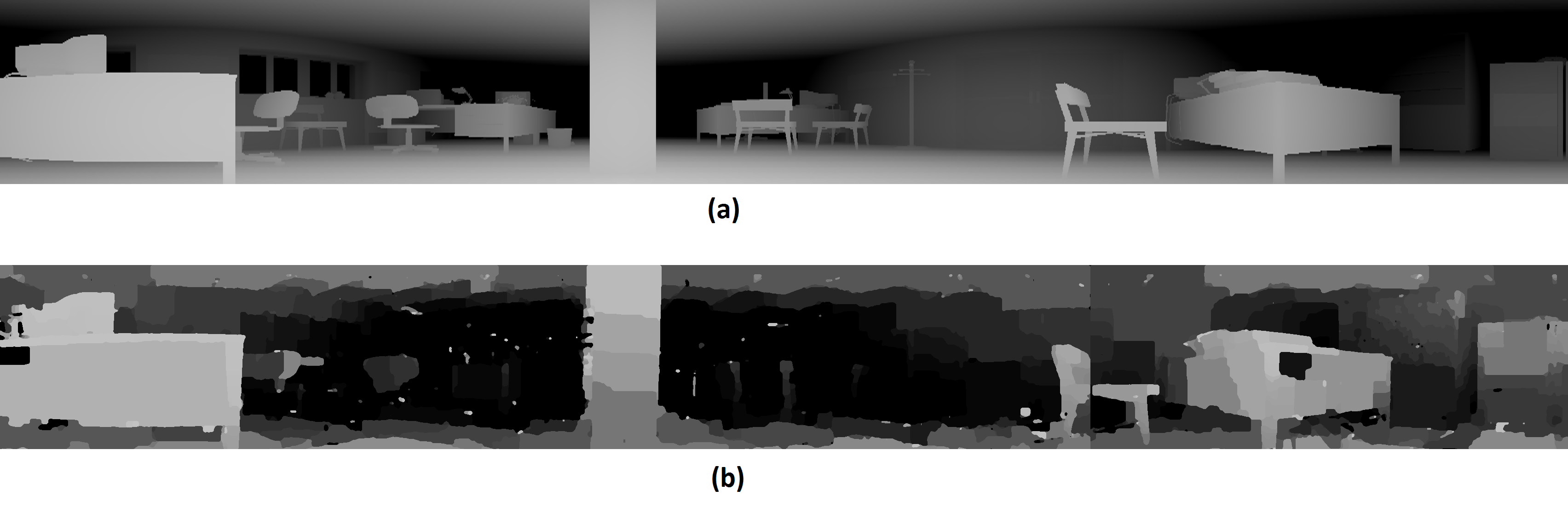

Comparison of the depth map reconstructed using the proposed setup (b) with the ground-truth depth map (a).
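To show how a disparity between the two panoramas can be turned into depth, the sketch below assumes the idealized omnistereo model, in which left-eye and right-eye rays are tangent (in opposite directions) to a viewing circle of radius r and both panoramas sample azimuth uniformly over 360°. Under that model a point at distance D from the circle's centre appears with angular disparity δ = 2·asin(r/D), so D = r / sin(δ/2). This is an assumption for illustration, not necessarily the reconstruction method used for the depth map above; the function name and its parameters (`pano_width_px`, `viewing_radius`) are hypothetical.

```python
import math


def depth_from_disparity(disparity_px: float, pano_width_px: int, viewing_radius: float) -> float:
    """Distance from the viewing-circle centre to a scene point (units of viewing_radius).

    Assumed idealized omnistereo model: rays tangent to a circle of radius r give
    angular disparity delta = 2 * asin(r / D), hence D = r / sin(delta / 2).
    """
    if pano_width_px <= 0 or viewing_radius <= 0:
        raise ValueError("panorama width and viewing radius must be positive")
    # Columns are assumed to span 360 degrees uniformly: pixels -> radians.
    delta = 2.0 * math.pi * (disparity_px / pano_width_px)
    # delta = 2 * asin(r / D) lies in (0, pi] for points at or beyond the circle.
    if not 0.0 < delta <= math.pi:
        raise ValueError("disparity must correspond to an angle in (0, pi]")
    return viewing_radius / math.sin(delta / 2.0)
```

For instance, with a hypothetical viewing radius of 0.032 m and a 4096-pixel-wide panorama, a 10-pixel disparity gives `depth_from_disparity(10, 4096, 0.032)` of roughly 4.2 m. Working in angular rather than pixel disparity keeps the formula independent of panorama resolution, and, unlike the pinhole relation Z = fB/d, it reflects the circular arrangement of viewpoints in this model.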

Please visit PanoStereo for more videos and results.

Related Publications

Rajat Aggarwal*, Amrisha Vohra*, Anoop M. Namboodiri - Panoramic Stereo Videos Using a Single Camera, IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 26 June to 1 July 2016. [PDF]

Associated People